Organizations often have large volumes of documents containing valuable information that remains locked away and unsearchable. This solution addresses the need for a scalable, automated text extraction and knowledge base pipeline that transforms static document collections into intelligent, searchable repositories for generative AI applications.

Organizations can automate the extraction of both content and structured metadata to build comprehensive knowledge bases that power retrieval-augmented generation (RAG) solutions while significantly reducing manual processing costs and time-to-value. The architecture not only demonstrates the processing of 500 research papers automatically, but also scales to handle enterprise document volumes cost-effectively through the Amazon Bedrock batch inference pricing model.

Amazon Bedrock batch inference is a feature of Amazon Bedrock that offers a 50% discount on inference requests compared with on-demand pricing. Amazon Bedrock schedules and runs a batch job (which requires a minimum of 100 inference requests) as capacity becomes available, so inference isn't real-time. For use cases that can tolerate minutes to hours of latency, Amazon Bedrock batch inference is a good option.

This post demonstrates how to build an automated, serverless pipeline using AWS Step Functions, Amazon Textract, Amazon Bedrock batch inference, and Amazon Bedrock Knowledge Bases to extract text, create metadata, and load it into a knowledge base at scale. The example solution processes 500 research papers in PDF format from Amazon Science, extracts text using Amazon Textract, generates structured metadata with Amazon Bedrock batch inference and the Amazon Nova Pro model, and loads the final output, including the metadata used for Amazon Bedrock Knowledge Base filters, into an Amazon Bedrock Knowledge Base.

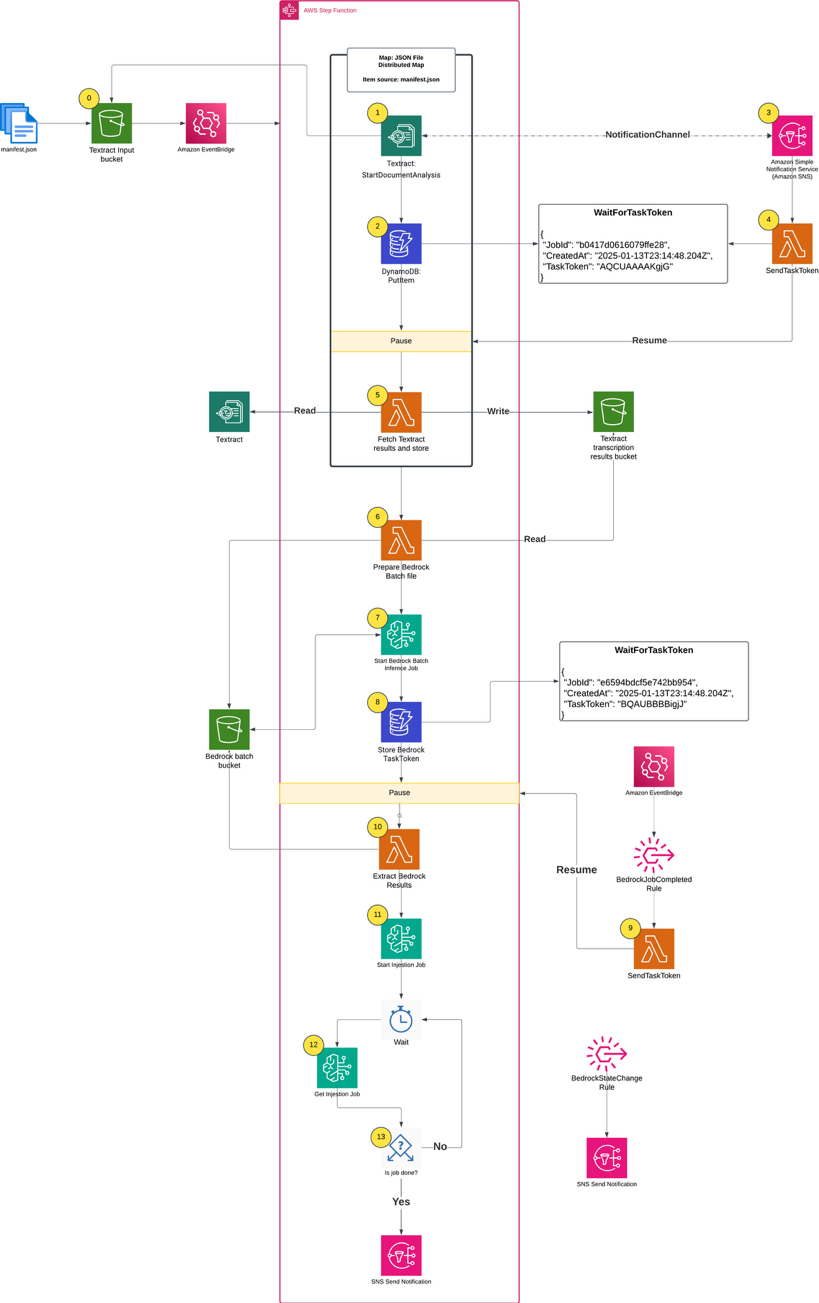

This solution uses Step Functions with parallel Amazon Textract job processing through child workflows run by Distributed Map. You can use the concurrency controls offered by Distributed Map to process documents as quickly as possible within your Amazon Textract quotas. Increasing processing speed necessitates adjusting your Amazon Textract quota and updating the Distributed Map configuration. Amazon Bedrock batch inference handles concurrency, scaling, and throttling. This means that you can create the job without managing these complexities.

In this example implementation, the solution processes research papers to extract metadata such as:

The high-level parts of this solution include:

Figure 1. Complete architecture diagram

The following prerequisites are necessary to complete this solution:

The complete solution uses AWS CDK to implement two AWS CloudFormation stacks:

First, clone the GitHub repository into your local development environment and install the requirements:

git clone https://github.com/aws-samples/sample-step-functions-batch-inference.git

cd sample-step-functions-batch-inference

npm install

Next, deploy the solution using AWS CDK:

cdk deploy --all

After deploying the AWS CDK stacks, upload your data sources (PDF files) into the Amazon S3 input bucket that AWS CDK created. In this example, I uploaded 500 Amazon Science papers. The input bucket name is included in the AWS CDK outputs:

Outputs:

SFNBatchInference.BatchInputBucketName = sfnbatchinference-batchinputbucket11aaa222-nrjki8tewwww

The process begins when you upload a manifest.json file to the input bucket. The manifest file lists the files for processing, which already exist in the input bucket. The filenames listed in manifest.json define what constitutes a single processing job run. To create another run, you would create a different manifest.json and upload it to the same S3 bucket.

The AWS CDK definition for the input bucket includes Amazon EventBridge notifications and creates a rule that triggers the Step Functions workflow whenever a manifest.json file is uploaded.
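The rule described above can be sketched as an EventBridge event pattern. This is a minimal illustration rather than the repository's CDK code: the bucket name is a placeholder, and matching on a `manifest.json` key suffix is an assumption about how the rule filters uploads.

```python
import json


def manifest_event_pattern(bucket_name: str) -> dict:
    """Build an EventBridge event pattern for manifest.json uploads.

    Assumption: the rule matches any object key ending in "manifest.json";
    the repository's actual rule may filter differently.
    """
    return {
        "source": ["aws.s3"],
        "detail-type": ["Object Created"],
        "detail": {
            "bucket": {"name": [bucket_name]},
            "object": {"key": [{"suffix": "manifest.json"}]},
        },
    }


# Hypothetical bucket name, for illustration only.
print(json.dumps(manifest_event_pattern("my-input-bucket"), indent=2))
```

A pattern like this only receives events when EventBridge notifications are enabled on the bucket, which is why the bucket definition turns them on.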

The first step in the Step Functions workflow is a Distributed Map run that performs the following actions for each PDF in the manifest file:

A key component of this architecture is the callback pattern that Amazon Textract supports through the NotificationChannel option, as shown in the preceding figure. The AWS CDK definition of the Step Functions state that starts the Amazon Textract job is shown in the following.

The Lambda function that handles task tokens extracts the Amazon Textract JobId from the Amazon SNS message, fetches the TaskToken from DynamoDB, and resumes the Step Functions workflow by sending the TaskToken:
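A minimal sketch of such a handler in Python follows. It is not the repository's implementation: the table name `TextractTaskTokens`, the `JobId` partition key, and the `TaskToken` attribute are hypothetical, and the clients are injectable so that the logic can be exercised without AWS access.

```python
import json


def parse_textract_notification(event: dict) -> tuple:
    """Return (JobId, Status) from the first SNS record in a Lambda event."""
    message = json.loads(event["Records"][0]["Sns"]["Message"])
    return message["JobId"], message["Status"]


def handler(event, context, dynamodb=None, sfn=None,
            table_name="TextractTaskTokens"):
    """Resume the waiting Step Functions task for a finished Textract job.

    Assumptions (hypothetical, not from the repository): a DynamoDB table
    with a string partition key "JobId" and a string attribute "TaskToken".
    """
    if dynamodb is None or sfn is None:
        import boto3  # imported lazily so the logic is testable offline
        dynamodb = dynamodb or boto3.client("dynamodb")
        sfn = sfn or boto3.client("stepfunctions")

    job_id, status = parse_textract_notification(event)

    # Look up the task token that was stored when the job was started.
    item = dynamodb.get_item(
        TableName=table_name, Key={"JobId": {"S": job_id}}
    )["Item"]
    task_token = item["TaskToken"]["S"]

    result = {"JobId": job_id, "Status": status}
    if status == "SUCCEEDED":
        sfn.send_task_success(taskToken=task_token, output=json.dumps(result))
    else:
        sfn.send_task_failure(
            taskToken=task_token,
            error="TextractJobFailed",
            cause=f"Job {job_id} ended with status {status}",
        )
    return result
```

Reporting failures with `send_task_failure` lets the child workflow fail fast instead of waiting for a task timeout.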

The Distributed Map runs up to 10 child workflows concurrently, controlled by the maxConcurrency setting. Although Step Functions supports running up to 10,000 parallel child workflow executions, the practical concurrency for this solution is constrained by Amazon Textract quotas. The StartDocumentAnalysis API has a default quota of 10 requests per second (RPS), so you must account for this limit when scaling your document processing workloads and, if you need higher throughput, request a quota increase.

When all of the Amazon Textract jobs finish, the Distributed Map state creates an Amazon Bedrock batch inference input file, launches the Amazon Bedrock inference job, and waits for it to complete.

A batch inference input is a single JSONL file with multiple entries, such as the following example. The prompt in each inference request instructs the large language model (LLM) to analyze the paper and extract metadata. Read the full prompt template in the GitHub repository.
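To illustrate the file layout, the following sketch builds JSONL records in the `recordId` / `modelInput` shape that batch inference expects. The `modelInput` body assumes the messages-style request schema used by Amazon Nova models, and the instruction text and record IDs are placeholders, not the repository's prompt template.

```python
import json


def build_record(record_id: str, paper_text: str, instructions: str) -> dict:
    """Build one batch inference record (one line of the JSONL file)."""
    # Placeholder prompt layout; the real template lives in the repository.
    prompt = f"{instructions}\n\n<paper>\n{paper_text}\n</paper>"
    return {
        "recordId": record_id,
        "modelInput": {
            "messages": [{"role": "user", "content": [{"text": prompt}]}],
        },
    }


def to_jsonl(records: list) -> str:
    """Serialize records as JSONL: one JSON object per line."""
    # json.dumps escapes newlines inside the paper text, so each record
    # stays on a single physical line.
    return "\n".join(json.dumps(record) for record in records) + "\n"


# Example with two hypothetical papers.
records = [
    build_record("paper-001", "First paper text...", "Extract metadata as JSON."),
    build_record("paper-002", "Second paper text...", "Extract metadata as JSON."),
]
jsonl_body = to_jsonl(records)
```

The `recordId` is what lets the workflow match each model output back to its source document after the job completes.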

After the batch inference completes, the workflow does the following:

The following shows the example metadata format:
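As a rough sketch of how such a file can be produced, Amazon Bedrock Knowledge Bases reads metadata from a sidecar object named `<source-file>.metadata.json` that wraps the attributes in a `metadataAttributes` object. The attribute names and values below are hypothetical placeholders, not the fields this solution's prompt extracts.

```python
import json


def metadata_key(source_key: str) -> str:
    """Return the S3 key of the metadata sidecar for a source document."""
    return f"{source_key}.metadata.json"


def metadata_document(attributes: dict) -> str:
    """Wrap extracted attributes in the metadataAttributes envelope."""
    return json.dumps({"metadataAttributes": attributes}, indent=2)


# Hypothetical attributes, for illustration only.
example_key = metadata_key("papers/example-paper.txt")
example_body = metadata_document({"publication_year": 2024, "topic": "RAG"})
```

Storing the sidecar next to the extracted text file lets a single ingestion job pick up both the content and its filterable attributes.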

After the workflow completes successfully, you can test the knowledge base to verify that the documents and metadata have been properly ingested and are searchable. There are two practical methods for testing an Amazon Bedrock Knowledge Base:

The Console provides an intuitive interface for testing your knowledge base queries with metadata filters:

Enter a natural language query related to your documents, for example: “Recent research on retrieval augmented generation?”

The console displays the generated response along with source attributions showing which documents were retrieved and used to formulate the answer, filtered by your specified metadata attributes, as shown in the following figure.

For programmatic testing and integration into applications, use the AWS SDK with metadata filtering. The following is a Python example using boto3:
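A minimal sketch of such a query follows, assuming the `bedrock-agent-runtime` client's `retrieve` operation with an `equals` metadata filter. The knowledge base ID, filter key, and filter value are placeholders that you would replace with your own.

```python
def build_retrieve_request(knowledge_base_id: str, query: str,
                           filter_key: str, filter_value,
                           top_k: int = 5) -> dict:
    """Build keyword arguments for a filtered knowledge base retrieval."""
    return {
        "knowledgeBaseId": knowledge_base_id,
        "retrievalQuery": {"text": query},
        "retrievalConfiguration": {
            "vectorSearchConfiguration": {
                "numberOfResults": top_k,
                # Only return chunks whose metadata attribute matches exactly.
                "filter": {"equals": {"key": filter_key, "value": filter_value}},
            }
        },
    }


def retrieve_chunks(client, **request_args) -> list:
    """Run the query and return (text, score) pairs for each result."""
    response = client.retrieve(**build_retrieve_request(**request_args))
    return [
        (result["content"]["text"], result.get("score"))
        for result in response["retrievalResults"]
    ]


# Usage (requires AWS credentials; all values are placeholders):
#   import boto3
#   client = boto3.client("bedrock-agent-runtime")
#   for text, score in retrieve_chunks(
#       client,
#       knowledge_base_id="YOUR_KB_ID",
#       query="Recent research on retrieval augmented generation?",
#       filter_key="topic",
#       filter_value="RAG",
#   ):
#       print(score, text[:80])
```

Keeping the request construction separate from the client call makes the filter logic easy to unit test without calling AWS.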

The following is the test script output:

This solution demonstrates how to combine multiple AWS AI and serverless services to build a scalable document processing pipeline. Organizations can use AWS Step Functions for orchestration, Amazon Textract for document processing, Amazon Bedrock batch inference for intelligent content analysis, and Amazon Bedrock Knowledge Bases for searchable storage. In turn, they can automate the extraction of insights from large document collections while optimizing costs.

By following this solution, you can build a solid foundation for production-scale document processing pipelines that remain flexible enough to adapt to your specific requirements while maintaining reliability, scalability, and operational excellence. Follow this link to learn more about serverless architectures.