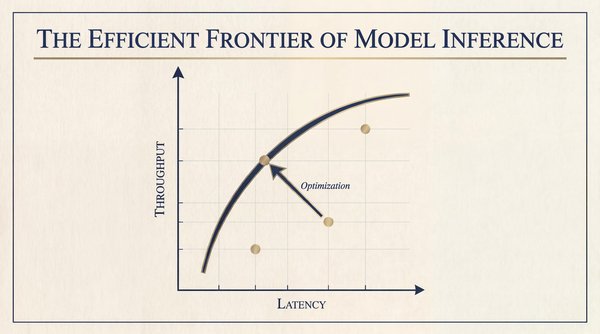

Every dollar spent on model inference positions you on a graph representing latency and throughput, where an optimal performance curve exists, known as the efficient frontier.

With a fixed hardware budget, you can balance latency against throughput, but improving one often means sacrificing the other unless the frontier itself expands. This article highlights two key dynamics: optimizing your current setup and adapting to ongoing advancements in technology.

Understanding the Efficient Frontier

Each LLM request undergoes two computational phases, each with unique bottlenecks:

- Prefill (Compute-Bound): The GPU processes the entire input prompt simultaneously, building the key-value (KV) cache for the attention mechanism. This phase is efficient, leveraging batch processing for rapid matrix multiplications.

- Decode (Memory-Bandwidth-Bound): Tokens are generated one at a time, requiring the GPU to fetch model weights and the KV cache from high-bandwidth memory (HBM). This sequential process creates a bottleneck, highlighting the need for trade-offs.

The Two Axes of Inference

The efficient frontier of LLM inference focuses on:

- Latency (X-Axis): Measured by Time to First Token (TTFT) and Time Between Tokens (TBT).

- Throughput (Y-Axis): Total tokens processed per second across users.

Cost serves as the underlying constraint for this graph. Enhancing your hardware budget or benefiting from new breakthroughs can shift the entire frontier outward.

Five Techniques to Reach the Frontier

Many production systems currently operate below the efficient frontier. Here are five techniques to optimize performance:

1. Semantic Routing Across Model Tiers

Not every task requires a complex model. Simple tasks can be directed to smaller, more efficient models, enhancing throughput without compromising quality.

2. Prefill and Decode Disaggregation

Separating the prefill and decode phases onto distinct hardware allows each to operate at its optimal capacity, reducing resource underutilization.

3. Quantization: Trading Precision for Speed

Reducing model weights from FP16 to INT8 or INT4 can significantly decrease memory usage and improve speed, although it may impact output quality. Advanced techniques can mitigate this tradeoff.

4. Context Routing

Implementing context-aware routing allows for efficient reuse of the KV cache, drastically reducing computation time and costs when multiple users request similar information.

5. Speculative Decoding

This technique utilizes a draft model to generate candidate tokens, allowing the primary model to verify them all at once, effectively overcoming memory bandwidth limitations.

Case Study: Vertex AI's Approach

The Vertex AI team improved their inference performance by adopting the GKE Inference Gateway, which introduced intelligent routing strategies:

- Load-aware routing: Real-time metrics help route requests to the fastest available pod.

- Content-aware routing: Requests are directed to pods that already contain the relevant context in their KV cache, avoiding costly recomputation.

This architecture led to significant performance gains, including a 35% reduction in TTFT and improved cache efficiency.

The Bottom Line

LLM inference operates within an efficient frontier that balances latency and throughput. By applying the techniques outlined, you can enhance your system's performance and adapt to ongoing advancements in the field. Staying proactive in optimizing your infrastructure will ensure you remain competitive in inference economics.