In banking, resolving a customer issue is rarely simple. Cases like fraud or blocked payments require strict adherence to complex procedures across multiple teams. When systems fall short, customers are passed between teams, wait in queues, and face delays at moments when the stakes are highest.

Gradient Labs is built to handle this complexity. The London-based company is building AI agents that give every bank customer the experience of a dedicated account manager. Founded by a team that previously led AI and data efforts at Monzo, the company’s platform is built on OpenAI models and is now shifting production traffic onto GPT‑5.4 mini and nano.

“We’re seeing 500-millisecond latency with GPT‑5.4 mini and nano, which is exactly what we need for natural voice conversations,” says Danai Antoniou, Co-Founder and Chief Scientist at Gradient Labs. “We’re moving a significant portion of our workload over.”

> “We needed three things simultaneously: accuracy at instruction-following, low hallucination rates, and function-calling reliability, all under voice latency constraints. OpenAI was the only provider that passed on all three.”

Danai Antoniou, Co-Founder and Chief Scientist at Gradient Labs

## Moving from SOPs to real-time systems

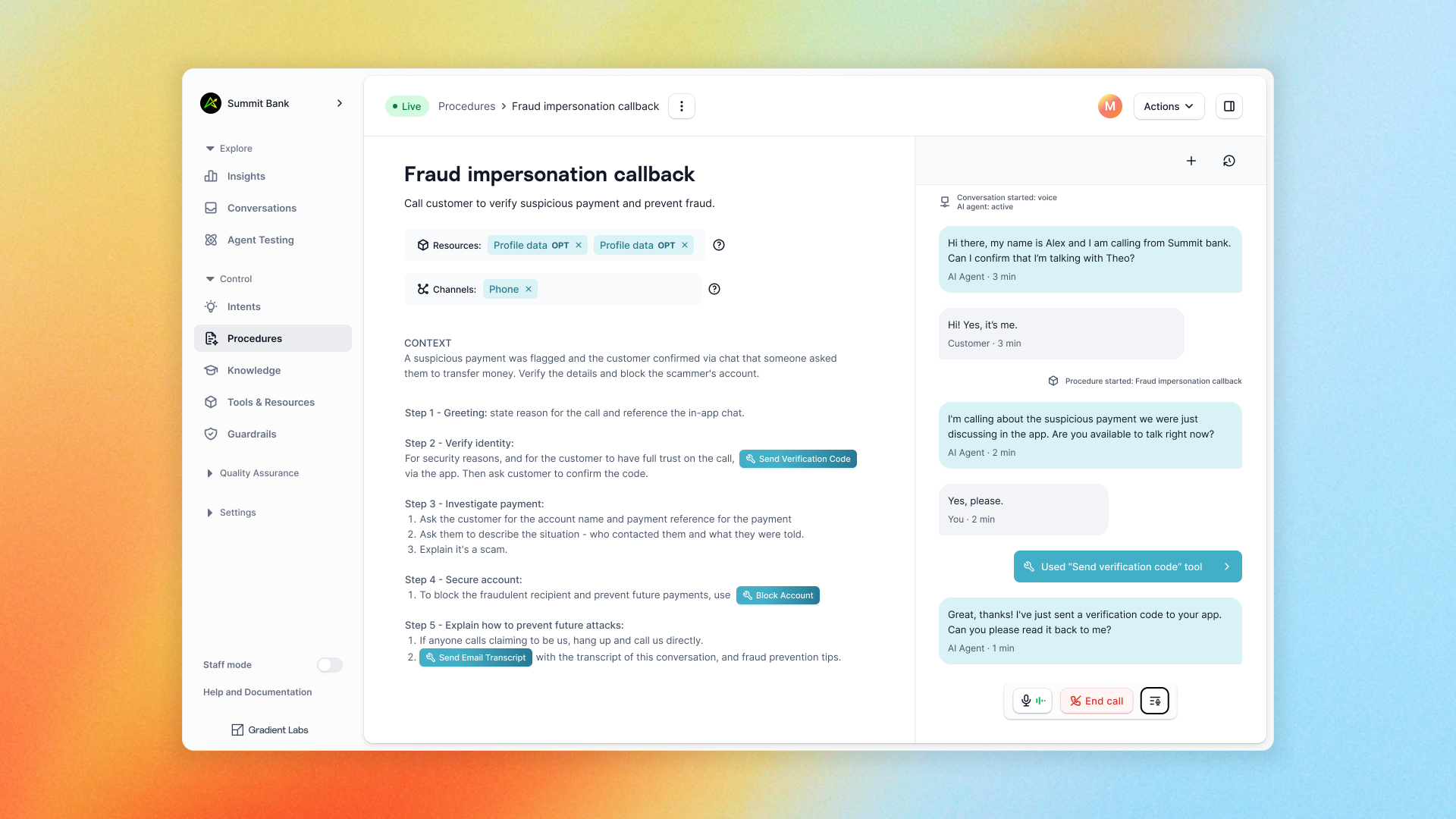

In banking, customer interactions are governed by standard operating procedures (SOPs) that define what should happen at each step.

A typical customer interaction might look like this:

1. A customer calls to report a stolen card.
2. The system verifies their identity, handling corrections and interruptions in real time.
3. Once verified, it freezes the card and initiates a replacement.
4. It answers follow-up questions, such as delivery timing, and suggests next steps.

Each step follows a defined procedure. Decisions are made in real time based on user input and context, and guardrails run on both customer and agent responses to ensure compliance.
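The stolen-card flow above can be pictured as a small state machine. The sketch below is illustrative only: the step names, the `Procedure` class, and its `advance` method are hypothetical and are not taken from Gradient Labs' system. It shows one way a procedure could reject out-of-order actions, such as freezing a card before identity has been verified.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical step names for the stolen-card procedure described above.
STEPS = ["report_received", "identity_verified", "card_frozen", "replacement_ordered"]


@dataclass
class Procedure:
    """Tracks progress through an ordered list of steps."""
    steps: List[str]
    completed: List[str] = field(default_factory=list)

    def next_step(self) -> Optional[str]:
        # The next required step, or None once the procedure is finished.
        if len(self.completed) < len(self.steps):
            return self.steps[len(self.completed)]
        return None

    def advance(self, step: str) -> bool:
        # Accept a step only if it is the next one required; otherwise leave
        # the state untouched so an interruption cannot skip ahead.
        if step == self.next_step():
            self.completed.append(step)
            return True
        return False


proc = Procedure(STEPS)
proc.advance("report_received")
skipped = proc.advance("card_frozen")   # rejected: identity not verified yet
proc.advance("identity_verified")
frozen = proc.advance("card_frozen")    # accepted now
```

Because a rejected step leaves `completed` unchanged, a topic switch or interruption in the middle of the call does not lose the caller's place in the procedure.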

“The model needs to maintain procedure state across interruptions, backchannels, and topic switches while keeping response generation fast,” says Antoniou. “Most providers couldn't even attempt it.”

Gradient Labs benchmarks providers on their most challenging procedures and evaluates them on what they call _trajectory accuracy_: whether the system follows the correct path from start to finish.

In one of their initial evals, GPT‑4.1 was the only model to hit 97% trajectory accuracy and consistency. The next-closest provider scored 88%.

“In financial services, that’s the difference between resolving a call and creating a compliance incident,” Antoniou says.
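The article does not give a formula for trajectory accuracy. As a minimal sketch, assume it is the fraction of conversations whose full sequence of steps exactly matches the expected sequence. The function name and the example data below are made up for illustration.

```python
from typing import List


def trajectory_accuracy(expected: List[List[str]], actual: List[List[str]]) -> float:
    """Fraction of conversations whose step sequence matches the expected one exactly."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must cover the same conversations")
    if not expected:
        return 0.0
    matches = sum(1 for exp, act in zip(expected, actual) if exp == act)
    return matches / len(expected)


# Made-up example: three conversations, one of which skips verification.
expected = [
    ["verify", "freeze", "replace"],
    ["verify", "freeze", "replace"],
    ["verify", "dispute"],
]
actual = [
    ["verify", "freeze", "replace"],
    ["freeze", "replace"],
    ["verify", "dispute"],
]
score = trajectory_accuracy(expected, actual)
```

Under this assumed definition, a single skipped or reordered step fails the whole conversation, which is stricter than scoring each step on its own.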

This result shaped how Gradient Labs designed its system. The team built a hybrid architecture that uses OpenAI models for reasoning-intensive steps and smaller models for faster, deterministic tasks, with routing that adapts based on complexity and latency constraints.
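One way to picture routing of this kind is a rule that looks at each step's complexity and latency budget. The sketch below is an assumption-heavy illustration: the `Step` fields, the tier labels `"reasoning"` and `"fast"`, and both thresholds are hypothetical, and the source does not describe how the actual router decides.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    complexity: float        # hypothetical score in [0, 1]; higher means more judgment needed
    latency_budget_ms: int   # hypothetical time allowed for this step's response


def pick_tier(step: Step,
              complexity_threshold: float = 0.6,
              min_reasoning_budget_ms: int = 800) -> str:
    """Return a hypothetical model tier label for a step."""
    # Use the larger tier only when the step is complex enough to need it
    # and the latency budget leaves room for a slower response.
    if (step.complexity >= complexity_threshold
            and step.latency_budget_ms >= min_reasoning_budget_ms):
        return "reasoning"
    return "fast"


dispute = pick_tier(Step("assess_dispute", complexity=0.9, latency_budget_ms=2000))
confirm = pick_tier(Step("confirm_address", complexity=0.2, latency_budget_ms=2000))
rushed = pick_tier(Step("assess_dispute", complexity=0.9, latency_budget_ms=300))
```

A production router would likely weigh more signals than these two. The point is only that the choice of tier can be made per step rather than per conversation.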

Internally, the system is composed of specialized skills orchestrated by a central reasoning agent, allowing complex cases to move across workflows without losing context.

For every interaction, 15+ guardrail systems run in parallel to ensure conversations stay within defined procedures and compliance boundaries, including financial advice detection, vulnerability signals, complaints, and attempts to bypass verification or access sensitive data.
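Running many independent checks in parallel keeps their combined latency close to that of the slowest single check. The sketch below uses Python's `asyncio.gather` to show the pattern. The two guardrail functions are toy keyword checks with hypothetical names, standing in for whatever classifiers a real system would use.

```python
import asyncio
from typing import Awaitable, Callable, List, Optional

# A guardrail takes a message and returns a violation label, or None if it passes.
Guardrail = Callable[[str], Awaitable[Optional[str]]]


async def financial_advice(message: str) -> Optional[str]:
    # Toy keyword check standing in for a real classifier.
    return "financial_advice" if "should i invest" in message.lower() else None


async def verification_bypass(message: str) -> Optional[str]:
    # Toy keyword check standing in for a real classifier.
    return "verification_bypass" if "skip verification" in message.lower() else None


async def run_guardrails(message: str, guardrails: List[Guardrail]) -> List[str]:
    """Run every guardrail concurrently and collect the violations raised."""
    results = await asyncio.gather(*(g(message) for g in guardrails))
    return [r for r in results if r is not None]


violations = asyncio.run(
    run_guardrails(
        "Can you skip verification just this once?",
        [financial_advice, verification_bypass],
    )
)
```

`asyncio.gather` returns results in the order the guardrails were passed in, so the list of violations is stable across runs.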

## Proving reliability in high-risk environments

Financial institutions don’t deploy systems like this on faith. They need to see, step by step, that the system behaves correctly under real-world conditions.

“You have to architect from the ground up for no hallucinations,” says Antoniou. “That needs to be the guiding principle as you’re building.”

To evaluate both new and existing models, the team replays real customer conversations and compares the system’s behavior against the expected procedure. They also generate synthetic conversations to test edge cases and rare scenarios before anything is deployed.

Gradient Labs also gives teams control over how the system is introduced. They analyze historical support data to map out the types of customer issues a bank handles and how often they occur. Teams can then choose which categories the AI should handle, starting with lower-risk workflows and expanding over time.

Before going live, customers can simulate conversations to review how the system responds across different scenarios, building confidence that it behaves as expected.

Deployment typically begins with a small percentage of traffic, with continuous monitoring and automated checks flagging conversations that may require human review. Over time, coverage expands as the system demonstrates consistent performance.
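A common way to send a fixed share of traffic to a new system is to hash a stable identifier into buckets. The sketch below assumes that approach. The source does not say how Gradient Labs splits traffic, and the function name and the `conv-` identifiers are hypothetical.

```python
import hashlib


def in_rollout(conversation_id: str, percent: float) -> bool:
    """Deterministically assign a conversation to the rollout for `percent` of traffic."""
    digest = hashlib.sha256(conversation_id.encode("utf-8")).digest()
    # Map the hash to one of 10,000 buckets, giving 0.01% granularity.
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return bucket < percent * 100


# Made-up identifiers to check that roughly 5% of traffic is selected.
ids = [f"conv-{i}" for i in range(10_000)]
share = sum(in_rollout(c, 5.0) for c in ids) / len(ids)
```

Because the bucket depends only on the identifier, raising `percent` later keeps every conversation that was already in the rollout and adds new ones, which suits a gradual expansion of coverage.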

## Showing impact on day one, and the path ahead

Gradient Labs’ customers report CSAT scores as high as 98%, in some cases outperforming their best human agents. Most deployments start with over 50% resolution rates on day one, even for complex workflows like disputes, account verification, and fraud.

That impact is reflected in the company’s growth. Gradient Labs has increased revenue more than 10x over the past year, expanding from inbound support into outbound and back-office processes.

Looking ahead, Gradient Labs is focused on systems that can carry context across interactions: understanding a customer’s history, tracking ongoing issues, and picking up where previous conversations left off. This direction is closely aligned with how Gradient Labs thinks about its long-term partnership with OpenAI.

> “We’re not just choosing a model for today. We’re building on a platform where we see the trajectory of reasoning models going in the same direction as our product.”

Danai Antoniou, Co-Founder and Chief Scientist at Gradient Labs

As models continue to improve, the range of procedures that can be safely automated expands. For Gradient Labs, that means moving closer to a system where every customer interaction is handled with the same consistency, judgment, and continuity as a top-tier human agent.

